There is a lot of focus in AI right now on capability.

New models, better benchmarks, faster performance. Each step forward is meaningful, and the progress is real.

But building systems with these tools has a way of shifting your perspective.

The challenge is not whether a model can produce a good result.

The challenge is whether a system can produce consistent results over time.

That difference becomes clear very quickly when you move beyond simple use cases.

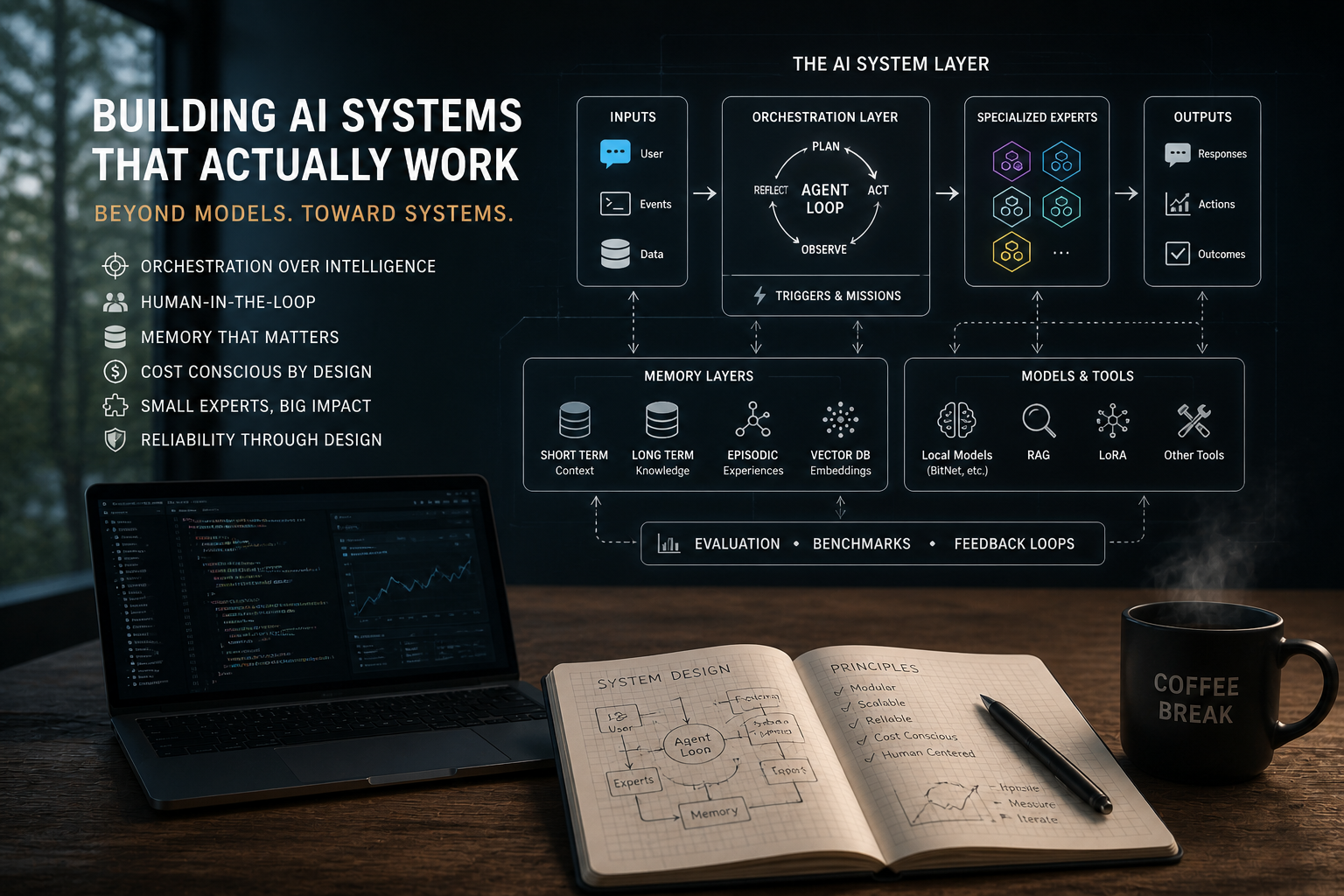

Lately I’ve been spending time working through this in a few different areas: improving user experience, refining agent loops, and thinking about how systems should operate as they grow more complex. Not just reacting to a single input, but handling sequences of actions, maintaining intent, and adapting as conditions change.

That introduces a different set of problems.

How do you manage memory in a way that is useful but not overwhelming?

How do you control cost as systems scale?

How do you ensure that outputs from one step align with the next?

How do you decide when a human should be involved?

These are not model problems. They are system problems.

There is also a growing realization that bigger is not always better.

Large models are powerful, but they are not always efficient or necessary. There is increasing value in smaller, more specialized models that can be fine-tuned for specific tasks. Techniques like LoRA (low-rank adaptation) and retrieval-augmented generation are gaining ground, and older ideas like modular or expert-based systems are becoming relevant again.
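To put a rough number on the efficiency point for LoRA: in the approach as originally described, a pretrained weight matrix $W_0$ is frozen and only a low-rank update is trained. The dimensions below are illustrative, not taken from any particular model.

$$
W' = W_0 + \Delta W = W_0 + BA, \qquad B \in \mathbb{R}^{d \times r},\; A \in \mathbb{R}^{r \times k},\; r \ll \min(d, k)
$$

$$
\text{full update: } dk \text{ parameters} \qquad \text{LoRA update: } r(d + k) \text{ parameters}
$$

With $d = k = 4096$ and $r = 8$, a full update trains $4096 \times 4096 = 16{,}777{,}216$ parameters, while the LoRA update trains $8 \times (4096 + 4096) = 65{,}536$, which is $1/256$ (about 0.39%) of the full matrix.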

The goal is not to build a single system that does everything.

It is to coordinate multiple components that each do something well.

That requires orchestration.

It also requires a shift in mindset.

Instead of chasing perfect outputs, the focus becomes building systems that can handle imperfection. Systems that can recover, validate, and adjust as they operate.

In practice, that means accepting that things will not work perfectly the first time.

Or the second.

Or even consistently over time.
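One way to picture "recover, validate, and adjust" is a small loop that runs a step, checks the result, feeds the error back into the next attempt, and hands off to a person when retries run out. This is a minimal sketch under assumptions: `run_with_recovery`, `StepResult`, `toy_step`, and `must_be_upper` are hypothetical names, not from any library, the "model call" is a toy stand-in, and escalation to a human is reduced to a flag.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class StepResult:
    output: Optional[str]  # accepted output, or None if no attempt passed
    attempts: int          # how many times the step was run
    escalated: bool        # True if the task should go to a human


def run_with_recovery(
    step: Callable[[str, Optional[str]], str],
    validate: Callable[[str], Optional[str]],
    task: str,
    max_attempts: int = 3,
) -> StepResult:
    """Run a step, validate its output, and retry with feedback on failure.

    Hypothetical contract: step(task, feedback) returns an output;
    validate(output) returns None if acceptable, else an error message.
    """
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        output = step(task, feedback)
        error = validate(output)
        if error is None:
            return StepResult(output=output, attempts=attempt, escalated=False)
        # Pass the validation error to the next attempt so it can adjust.
        feedback = error
    # Every attempt failed validation: flag the task for a human.
    return StepResult(output=None, attempts=max_attempts, escalated=True)


# Toy stand-ins (hypothetical) for a model call and an output check.
def toy_step(task: str, feedback: Optional[str]) -> str:
    # The first attempt ignores the required format; a retry with feedback fixes it.
    return task.upper() if feedback else task


def must_be_upper(output: str) -> Optional[str]:
    return None if output.isupper() else "output must be upper-case"


if __name__ == "__main__":
    print(run_with_recovery(toy_step, must_be_upper, "summarize report"))
    # StepResult(output='SUMMARIZE REPORT', attempts=2, escalated=False)
```

The point of the design is that failure is an expected path rather than an exception: the validator decides what "good enough" means, the feedback lets the next attempt adjust, and `max_attempts` puts a ceiling on cost before a person is brought in.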

Progress becomes iterative. Measured in small improvements rather than large breakthroughs.

This is not as visible as a new model release. It does not produce the same kind of headlines.

But it is where most of the real work is happening.

As AI continues to evolve, the differentiator will not be access to capability.

It will be the ability to turn that capability into systems that are reliable, cost-effective, and aligned with real-world use.

That is a harder problem.

And it is the one worth solving.

☕